What is load balancing?

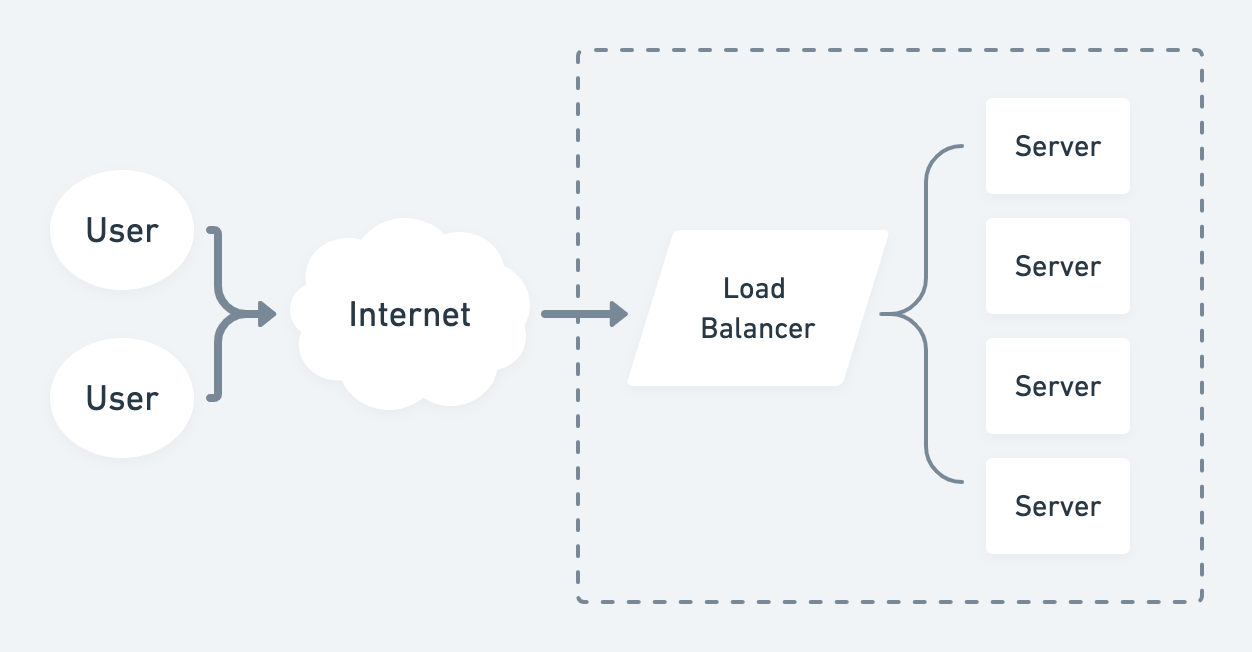

A load balancer is a type of server that sits between external web traffic and backend servers. It receives incoming requests and distributes traffic across two or more servers.

Every server has a maximum resource capacity or workload that it can handle. When a server reaches its maximum capacity, it can be scaled in two ways:

- More resources such as processing power, memory, or storage can be added to a server up to a finite amount. This is known as vertical scaling.

- More servers are added. This is known as horizontal scaling.

Load balancing is used with horizontal scaling when you have two or more servers.

Here are some key benefits of using a load balancer:

- Minimize response time - the time it takes to respond to a request.

- Maximize throughput - increase the number of requests an application can handle.

- Scale quickly - add or remove servers based on request volume and system demand.

- Achieve higher availability - when a server has reached its maximum capacity, adding more servers keeps your application running and available.

- Avoid overload of a single resource - balance load across multiple servers.

- Monitor server health - route traffic to different servers if one is down.
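The health-monitoring benefit can be illustrated with a minimal sketch. The class name `HealthAwareBalancer`, its methods, and the server names are all hypothetical. A real load balancer would mark servers up or down from periodic health checks rather than manual calls.

```python
class HealthAwareBalancer:
    """Rotate through servers in order, skipping any marked unhealthy."""

    def __init__(self, servers):
        self.servers = list(servers)
        self.healthy = set(self.servers)
        self._position = 0

    def mark_down(self, server):
        # In practice this would be triggered by a failed health check.
        self.healthy.discard(server)

    def mark_up(self, server):
        if server in self.servers:
            self.healthy.add(server)

    def next_server(self):
        # Try each server at most once per call.
        for _ in range(len(self.servers)):
            server = self.servers[self._position]
            self._position = (self._position + 1) % len(self.servers)
            if server in self.healthy:
                return server
        raise RuntimeError("no healthy servers available")


balancer = HealthAwareBalancer(["app-1", "app-2", "app-3"])
balancer.mark_down("app-2")
picks = [balancer.next_server() for _ in range(4)]
# picks alternates between app-1 and app-3 while app-2 is down
```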

What makes a good load balancer?

A well-designed load balancer is efficient, highly available, and secure.

Engineering teams often use a reverse proxy such as HAProxy or Nginx, both of which are designed for managing and distributing web traffic.

Cloud providers also offer out-of-the-box load balancing services, such as Amazon's Elastic Load Balancing (ELB).

While a single load balancer can be placed between external traffic and application servers, this is not always the case.

In a microservice architecture, you would use a load balancer in front of each service, allowing each part of the system to be scaled independently.

Load balancing can't replace good system design.

Adding more servers won’t fix issues related to inefficient calculations, slow database queries, or unreliable third-party APIs. These must be addressed independently.

Differences between rate limiting and load balancing

While often used together, load balancing should not be confused with rate limiting.

Load balancers improve the scalability, reliability, and performance of applications. Multiple servers are grouped together to distribute the load, ensure high availability, and minimize latency.

On the other hand, rate limiters prevent abuse from a single user or organization by intentionally throttling or dropping requests that exceed a specific threshold.

Rate limiting is a common mechanism for protecting applications against DDoS attacks, which try to consume server resources and make applications unavailable.
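To make the contrast concrete, here is a minimal sketch of a rate limiter. It assumes a fixed-window counter, which is only one of several possible strategies. The class name, the `limit` and `window_seconds` parameters, and the client IDs are hypothetical. Timestamps are passed in explicitly so the behavior is easy to follow.

```python
from collections import defaultdict


class FixedWindowRateLimiter:
    """Allow at most `limit` requests per client in each time window."""

    def __init__(self, limit, window_seconds):
        self.limit = limit
        self.window_seconds = window_seconds
        # (client_id, window_index) -> requests seen in that window.
        # Old windows are never evicted here; a real limiter would expire them.
        self.counts = defaultdict(int)

    def allow(self, client_id, now):
        window = int(now // self.window_seconds)
        key = (client_id, window)
        if self.counts[key] >= self.limit:
            return False  # over the threshold: drop or throttle
        self.counts[key] += 1
        return True


limiter = FixedWindowRateLimiter(limit=2, window_seconds=60)
results = [
    limiter.allow("alice", now=0),
    limiter.allow("alice", now=1),
    limiter.allow("alice", now=2),   # third request in the same window
    limiter.allow("bob", now=2),     # a different client has its own budget
    limiter.allow("alice", now=61),  # a new window resets the count
]
```

Note that the limiter decides whether a request is served at all, while a load balancer decides which server serves it.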

Load balancing concepts for system design interviews

Understanding these key concepts and interview questions about load balancing will help you prepare for your system design interviews.

What is a load balancer? Why is it used?

A load balancer is a reverse proxy that receives web traffic and distributes it across multiple backend servers.

The main benefits of a load balancer are improved performance, scalability, and reliability. A load balancer can also help to minimize latency and reduce downtime by providing redundancy and failover capabilities.

How does a load balancer decide which server to send requests to?

Load balancers typically route traffic using the following mechanisms:

- Round robin. Client requests are distributed to a list of servers in turn.

- Least connections. A request is sent to the server with the fewest open connections.

- IP hashing. A hash of the client's IP address selects the server, so requests from the same client are always sent to the same server.
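The three mechanisms above can be sketched in a few lines each. This is an illustration under simple assumptions: the server names, the `open_connections` table, and the function names are hypothetical, and a real load balancer would track connection counts itself.

```python
import hashlib
from itertools import cycle

servers = ["app-1", "app-2", "app-3"]

# Round robin: hand out servers in a repeating order.
_rotation = cycle(servers)

def round_robin():
    return next(_rotation)

# Least connections: pick the server with the fewest open connections.
open_connections = {"app-1": 4, "app-2": 1, "app-3": 7}

def least_connections():
    return min(servers, key=lambda server: open_connections[server])

# IP hashing: the same client IP always maps to the same server,
# as long as the server list does not change.
def ip_hash(client_ip):
    digest = hashlib.sha256(client_ip.encode("utf-8")).digest()
    return servers[int.from_bytes(digest[:8], "big") % len(servers)]
```

A stable hash such as SHA-256 is used instead of Python's built-in `hash()`, because the built-in is randomized per process for strings and would not give the same mapping after a restart.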

What are the types of load balancers?

Typically, load balancers come in two types:

Layer 4 (L4) load balancer

Layer 4 is the transport layer of the OSI model. A Layer 4 (L4) load balancer, also known as a network load balancer, makes its routing decisions at this layer.

Here, routing decisions are based on transport-layer information, such as source and destination IP addresses and TCP or UDP ports.

L4 load balancers perform Network Address Translation (NAT) on the request packet. They don't inspect the contents of the packets themselves, though.

This load balancer gets the most use and availability out of IP addresses, switches, and routers by spreading the traffic across them.
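As a rough sketch of the idea, the function below chooses a backend from addressing information only and then rewrites the destination, which is the NAT step. The `Packet` type, the `BACKENDS` list, and the hashing scheme are assumptions made for illustration. Real L4 load balancers operate on actual network packets, typically in the kernel or in hardware.

```python
import hashlib
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class Packet:
    src_ip: str
    src_port: int
    dst_ip: str
    dst_port: int
    payload: bytes  # never read by the balancer below


# Hypothetical backend addresses.
BACKENDS = [("10.0.0.11", 8080), ("10.0.0.12", 8080)]


def forward(packet):
    """Pick a backend from addresses and ports, then rewrite the destination."""
    key = f"{packet.src_ip}:{packet.src_port}->{packet.dst_ip}:{packet.dst_port}"
    digest = hashlib.sha256(key.encode("utf-8")).digest()
    index = int.from_bytes(digest[:8], "big") % len(BACKENDS)
    backend_ip, backend_port = BACKENDS[index]
    # NAT: only the destination changes; the payload passes through untouched.
    return replace(packet, dst_ip=backend_ip, dst_port=backend_port)


incoming = Packet("198.51.100.4", 50312, "203.0.113.10", 443, b"opaque bytes")
outgoing = forward(incoming)
```

Hashing the connection's addresses and ports means every packet of the same connection reaches the same backend.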

Layer 7 (L7) Load Balancer

Application Load Balancer (ALB) is a Layer 7 load balancer. It routes traffic based on the content of the request.

It allows routing decisions to be made at the application layer (HTTP/HTTPS).

An ALB can use HTTP headers, cookies, the uniform resource identifier (URI), the SSL session ID, and HTML form data to determine which server should handle a request. This makes it possible to direct requests to different servers based on factors such as user location or device type.

Because it can read the request, an Application Load Balancer can send each request to the servers best suited to handle it, which can distribute requests across multiple servers more efficiently.
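Content-based routing can be sketched as a function that inspects the request path and headers. The pool names, path prefixes, and the `choose_pool` function are hypothetical, and the device check is deliberately crude.

```python
def choose_pool(path, headers):
    """Return the name of the server pool that should handle a request."""
    # HTTP header names are case-insensitive, so normalize before lookup.
    normalized = {name.lower(): value for name, value in headers.items()}

    if path.startswith("/api/"):
        return "api-servers"
    if path.startswith("/static/"):
        return "static-servers"
    # Simplified device detection based on the User-Agent header.
    if "mobile" in normalized.get("user-agent", "").lower():
        return "mobile-web-servers"
    return "default-web-servers"
```

For example, `choose_pool("/api/orders", {})` selects the API pool, while a request for `/home` with a mobile User-Agent is sent to the mobile pool.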

What is sticky session load balancing?

Sticky session load balancing maps requests from a specific client to the same server, for example by hashing the client IP address or by setting a cookie. Subsequent requests from the same user are always sent to the same server for the duration of the user session.

Why is this helpful? All data related to a user session is stored on a single server instead of all servers. This keeps session state consistent and avoids fetching session data from another machine on each request, which reduces latency.

Sticky sessions can load servers unevenly, so it is essential to understand your network topology and traffic patterns and to monitor server performance to mitigate any bottlenecks.
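IP hashing is one way to pin a client to a server. Another common approach, assumed in the sketch below, is to record the chosen server in a cookie. The `StickyBalancer` class and the cookie name `lb_server` are hypothetical.

```python
from itertools import cycle


class StickyBalancer:
    COOKIE_NAME = "lb_server"  # hypothetical cookie name

    def __init__(self, servers):
        self.servers = list(servers)
        self._rotation = cycle(self.servers)

    def route(self, cookies):
        """Return (server, cookies_to_set) for a request's cookie dict."""
        pinned = cookies.get(self.COOKIE_NAME)
        if pinned in self.servers:
            # Returning client: keep the session on the same server.
            return pinned, {}
        # New client, or the pinned server was removed: pick one and pin it.
        server = next(self._rotation)
        return server, {self.COOKIE_NAME: server}


balancer = StickyBalancer(["app-1", "app-2"])
first_server, new_cookies = balancer.route({})
repeat_server, _ = balancer.route(new_cookies)
# repeat_server equals first_server because the cookie pins the client
```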

What is a reverse proxy server?

A reverse proxy server is an intermediary server that sits between clients and backend servers. It forwards client requests to one or more backend servers and then returns the response to the client.

Here are some benefits of using a reverse proxy:

- Load balancing. A load balancer is an example of a reverse proxy as it receives web traffic and distributes it to multiple origin servers.

- Hide IP addresses. Reverse proxies hide and secure the IP addresses of your origin servers from external users. Clients can only interact with the reverse proxy itself.

- Act as a gateway. Reverse proxies allow you to access and serve content from multiple servers without exposing each resource directly on the internet.

- Caching. Reverse proxies can be used to cache content so it can be served faster and more efficiently. This reduces the load on origin servers and improves overall performance.
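The caching benefit can be sketched with a proxy that only contacts the origin on a cache miss. Here the origin is simulated by a plain function, and the names `CachingReverseProxy` and `fake_origin` are hypothetical. The cache never expires entries, which a real proxy would need to handle.

```python
class CachingReverseProxy:
    """Serve repeated requests from memory instead of the origin server."""

    def __init__(self, origin_fetch):
        self.origin_fetch = origin_fetch  # callable: path -> response body
        self.cache = {}

    def handle(self, path):
        if path not in self.cache:
            # Cache miss: forward to the origin and remember the response.
            self.cache[path] = self.origin_fetch(path)
        return self.cache[path]


origin_calls = []

def fake_origin(path):
    # Stand-in for a real backend; records each call it receives.
    origin_calls.append(path)
    return f"content of {path}"


proxy = CachingReverseProxy(fake_origin)
proxy.handle("/index.html")
proxy.handle("/index.html")  # served from the cache
proxy.handle("/about.html")
# origin_calls now holds one entry per distinct path
```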

What is round-robin load balancing?

Round-robin load balancing is a popular algorithm for distributing client requests across multiple backend servers. Requests are cyclically assigned to servers, so every server takes its turn in receiving and processing a request.

Here are some variations of the round-robin algorithm:

- Weighted round robin. Weights are assigned to each server. If Server A has a weight of 1 and Server B has a weight of 2, then Server B receives twice as many requests as Server A.

- Modified round robin. Weights are assigned to servers based on how long they take to process requests.

- Dynamic round robin. Weights are adjusted dynamically based on server load, allowing for efficient distribution of requests.
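Weighted round robin can be sketched by repeating each server in the rotation according to its weight. This is the simplest possible approach, assumed here for clarity. Production load balancers often interleave servers more smoothly. The function and server names are hypothetical.

```python
from itertools import cycle


def weighted_round_robin(weights):
    """Return an endless iterator where each server appears `weight` times per cycle."""
    expanded = [
        server
        for server, weight in weights.items()
        for _ in range(weight)
    ]
    return cycle(expanded)


# Server A has a weight of 1 and Server B has a weight of 2.
rotation = weighted_round_robin({"server-a": 1, "server-b": 2})
first_six = [next(rotation) for _ in range(6)]
# Over six requests, server-b is chosen four times and server-a twice.
```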

Using SSL load balancing

When using SSL (Secure Sockets Layer, now superseded by TLS) with load balancing, the load balancer must be configured to handle the decryption and re-encryption of data before it is sent to a backend server. This ensures secure communication between two endpoints.

When a request comes in, the load balancer must first decrypt it, determine which server should receive it, and then re-encrypt it before sending it off. This process adds latency in exchange for data security.

If multiple SSL certificates are used for different domains or subdomains, the load balancer must be able to select the correct certificate for each request. This could be time-consuming, depending on how many certificates need to be managed.
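Certificate selection is usually driven by the hostname the client requests during the TLS handshake, known as Server Name Indication (SNI). The lookup itself can be sketched as follows. The `select_certificate` function and the certificate labels are hypothetical placeholders, not real certificate objects.

```python
def select_certificate(hostname, certificates, default):
    """Pick a certificate for a hostname: exact match, then wildcard, then default."""
    hostname = hostname.lower()
    if hostname in certificates:
        return certificates[hostname]
    # A wildcard such as "*.example.com" covers exactly one extra label.
    _, _, parent = hostname.partition(".")
    wildcard = f"*.{parent}"
    if parent and wildcard in certificates:
        return certificates[wildcard]
    return default


certificates = {
    "example.com": "example-cert",
    "*.example.com": "wildcard-cert",
}
```

With this table, `api.example.com` resolves to the wildcard certificate, and an unknown domain falls back to the default. Because this is a dictionary lookup, the per-request cost stays small even with many certificates. The management burden lies more in issuing and renewing them.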

SSL load balancing doesn't improve performance. Instead, it adds complexity and latency, which makes it an important factor when designing system architecture.
