Complete Guide to Machine Learning Engineering Interviews

Machine Learning Exponent Team • Last updated

Do you have an upcoming machine learning interview?

This is an ML engineer interview guide that covers core machine learning concepts you should know before going into interviews and what to expect during the interview process.

Who wrote this guide?

This guide was written with the help of Angelica Chen, a machine learning Ph.D. candidate at NYU's Center for Data Science.

Her research interests broadly revolve around training language models (LMs) for code generation, particularly with human feedback, and improving the evaluation of LMs. This includes long-context question answering and addressing social biases.

Previously, she worked as a researcher at Google Brain and as a student researcher at Google Research, where she contributed to training streaming models for disfluency detection.

Additionally, she served as a software engineer at Google Search, where she developed neural models for semantic parsing.

Her skill set includes researching, designing experiments, and implementing model training pipelines using PyTorch, TensorFlow, JAX, and Haiku.

She has interviewed for Google Brain, Meta AI Research, MosaicML, and Microsoft Research.

Natural Language Processing and Computer Vision Interviews

Break down your prep into these focus areas to prepare for an upcoming interview.

Part 1: Machine Learning Fundamentals

Before practicing for your interviews, review the fundamentals of machine learning.

- Classification, regression, generation.

- Optimization: Types of loss functions like MSE, ranking loss, hinge loss, and cross-entropy, as well as regularization. Gradient and subgradient descent and back-propagation.

- Linear Models: Linear regression, generalized additive models (GAMs), and support vector machines (SVMs) are essential topics in machine learning. They help build an intuition for other models.

- Tree methods: Bagging, boosting, forward stagewise additive modeling, AdaBoost, decision trees, and random forests.

- Unsupervised Learning: Clustering techniques such as k-means, hierarchical clustering, and mixture models, as well as expectation-maximization (EM) algorithms, and dimensionality reduction methods such as principal component analysis, non-negative matrix factorization, and singular value decomposition (SVD).

- Recommendation Systems: Content-based filtering and collaborative filtering are two popular recommendation techniques. Matrix factorization is a commonly used method for collaborative filtering. Another approach to recommendation is retrieval, followed by ranking.

- Deep learning: Basic neural network architectures such as MLPs, RNNs, and convolutional nets, as well as best practices for training. This includes using a training/validation/testing split, tracking multiple metrics on the validation dataset, using dropout, warming up the learning rate, applying weight decay, and monitoring for convergence, overfitting, or double descent.
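As a refresher on the loss functions named in the Optimization bullet above, here is a minimal plain-Python sketch. The function names (`mse`, `hinge`, `binary_cross_entropy`, `l2_penalty`) are illustrative choices for this guide and do not come from any particular library; in practice you would likely use your framework's built-in losses.

```python
import math

def mse(y_true, y_pred):
    """Mean squared error for regression targets."""
    return sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)

def hinge(y_true, scores):
    """Mean hinge loss; labels are -1 or +1, scores are raw model outputs."""
    return sum(max(0.0, 1.0 - t * s) for t, s in zip(y_true, scores)) / len(y_true)

def binary_cross_entropy(y_true, probs, eps=1e-12):
    """Mean binary cross-entropy; labels are 0 or 1, probs are P(y = 1)."""
    total = 0.0
    for t, p in zip(y_true, probs):
        p = min(max(p, eps), 1.0 - eps)  # clip to avoid log(0)
        total += -(t * math.log(p) + (1 - t) * math.log(1.0 - p))
    return total / len(y_true)

def l2_penalty(weights, lam):
    """L2 regularization term that can be added to any of the losses above."""
    return lam * sum(w * w for w in weights)
```

Note that the hinge loss is zero once an example is classified with a margin of at least 1, which is why it is optimized with subgradients rather than ordinary gradients at the kink.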

Part 2: Machine Learning for NLP

Next, spend time with natural language processing to understand how to build and fine-tune models.

- Fundamentals of NLP: Distributional hypothesis, word vector representations (such as GloVe and word2vec), basic NLP models (such as n-gram models and bag-of-words), and types of classic NLP tasks (such as semantic parsing, dependency parsing, translation, understanding, and natural language inference).

- Basics of Sequence Modeling: Mapping language to continuous representations/embeddings, recursive and recurrent neural networks (such as LSTMs, GRUs, and RNNs), and evaluation metrics.

- Attention and Transformers: The basics of the self-attention mechanism and the Transformer architecture, encoder versus decoder transformers, and auto-regressive decoding strategies (such as greedy decoding, beam search, temperature sampling, and nucleus sampling).

- Pre-training and fine-tuning: Training objectives (such as MLM, CLM, denoising, NSP, and contrastive) and best practices for each stage.

- Prompting/In-context learning: Few-shot learning, prompting (including standard techniques such as chain-of-thought, self-consistency, and least-to-most prompting), prompt tuning, scaling laws, and common LMs that are used with prompting (such as GPT-3/4, Claude, GPT-J, and OPT).
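To make the decoding strategies from the Attention and Transformers bullet above concrete, here is a small plain-Python sketch of greedy decoding, temperature sampling, and nucleus (top-p) sampling over a single step's logits. The function names and the toy logits are assumptions made for illustration; real libraries operate on batched tensors and expose their own interfaces. Beam search is left out to keep the sketch short.

```python
import math
import random

def softmax(logits, temperature=1.0):
    """Convert logits to probabilities; temperature < 1 sharpens, > 1 flattens."""
    scaled = [x / temperature for x in logits]
    m = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def greedy(logits):
    """Greedy decoding: always pick the highest-scoring token."""
    return max(range(len(logits)), key=lambda i: logits[i])

def nucleus_candidates(probs, top_p):
    """Smallest set of top tokens whose cumulative probability reaches top_p."""
    order = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)
    kept, cumulative = [], 0.0
    for i in order:
        kept.append(i)
        cumulative += probs[i]
        if cumulative >= top_p:
            break
    return kept

def sample(logits, temperature=1.0, top_p=1.0, rng=random):
    """Temperature sampling, optionally restricted to the nucleus."""
    probs = softmax(logits, temperature)
    kept = nucleus_candidates(probs, top_p)
    weights = [probs[i] for i in kept]  # choices() normalizes the weights
    return rng.choices(kept, weights=weights, k=1)[0]

# Hypothetical logits for a three-token vocabulary.
toy_logits = [2.0, 1.0, 0.1]
next_token = sample(toy_logits, temperature=0.8, top_p=0.9, rng=random.Random(0))
```

With `top_p=1.0` and `temperature=1.0`, `sample` reduces to sampling from the model's unmodified distribution; shrinking either parameter trades diversity for determinism.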

Part 3: Machine Learning for Computer Vision

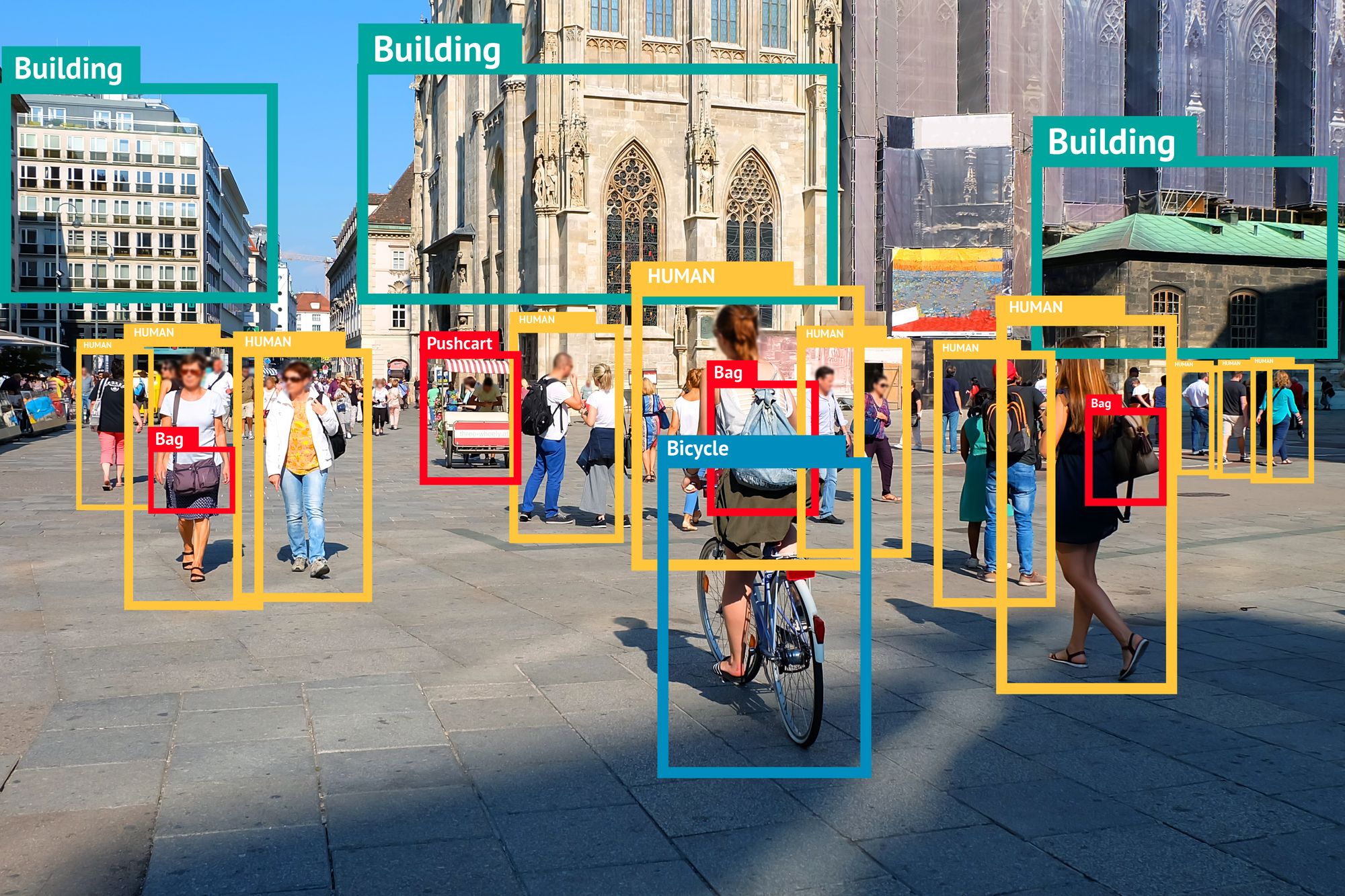

- Introduction to CV tasks: Image classification and image/video understanding.

- Perception: Convolutional nets (pooling, convolutions, image features, down-/up-sampling), object detection, and image/semantic segmentation.

- Multimodal understanding and generation: GANs, VAEs, and diffusion models (such as Stable Diffusion).

Part 4: Machine Learning System Design

Some sample questions that you might be asked during an ML system design interview include:

- Recommend artists to follow on Spotify

- Design an intelligent search system for YouTube

- Recommend add-on items for a cart on Amazon

- Recommend restaurants in Google Maps

- Design a system that filters out offensive content from online comments

- Design a model for Netflix that predicts watch time for a user

- Design a system for responding to customer support messages

Interview Structure

The ML research scientist and ML engineer interview loops typically involve the following steps.

- Recruiter screen (30 minutes): The recruiter briefly discusses the job expectations and assesses the candidate's potential fit for the role.

- ML coding interview (45 minutes): The candidate will be asked about their understanding of an ML framework (such as TensorFlow or PyTorch) and a core ML concept relevant to the team's sub-field (such as transformers or convolutional nets). They will need to correctly implement a solution and explain its function within a broader system. A follow-up question may involve extending the system to a more complex scenario.

- ML concepts interview (45 minutes): This involves discussing fundamental ML concepts with an ML engineer or scientist, exploring the candidate's research interests and abilities in experiment design and interpretation, and possibly asking questions related to the company's niche.

- ML system design (45 minutes): In this step, the candidate is asked to design an ML system end to end, including pre-processing the data, training and evaluating the model, and deploying the model. They will be expected to know some of the more practical real-world aspects of productionizing an ML model, particularly concerning efficiency, monitoring, preventing harmful model outputs, and building inference infrastructure.

- Research job talk (60 minutes): Typically for Research Scientist roles, candidates present a one-hour talk on their past research. This involves explaining motivations, impact, and technical aspects, answering questions, defending their approach, and connecting it to relevant work in the field. It's somewhat like a simpler dissertation defense.

- Hiring manager (30 minutes): In this final step, the hiring manager usually has a short discussion with the candidate to assess whether their skills and working style fit well with those of the team. ML research teams tend to hire candidates who closely match the team's needs and have specialized expertise in its niche.

Interview loops can vary by company and may not include all these steps, but this gives a general idea of what an ML research scientist/engineer interview involves.

System Design Interview Framework

In the 45-minute ML system design interview, you'll design a complete system covering data pre-processing, model training and evaluation, and deployment.

Possible questions include designing systems for:

- Spotify recommendations

- Filtering offensive content on YouTube

- Predicting Netflix user watch time

- Responding to customer support messages

- Personalized LinkedIn job recommendations

These questions evaluate your ability to model business problems as ML problems and consider real-world production aspects like efficiency, monitoring, harm prevention, and inference infrastructure development.

A framework can guide you to manage your time effectively and converse clearly with the interviewer.

This is a 6-step framework for 45-minute interviews.

- Step 1: Define the problem. Identify the core ML task, and ask clarifying questions to determine the appropriate requirements and tradeoffs. (8 minutes)

- Step 2: Design the data processing pipeline. Illustrate how you’ll collect and process your data to maintain a high-quality dataset. (8 minutes)

- Step 3: Create a model architecture. Come up with a suitable model architecture that would address the needs of the core ML task identified in Step 1. (8 minutes)

- Step 4: Train and evaluate the model. Select a model and explain how you’ll train and evaluate it. (8 minutes)

- Step 5: Deploy the model. Determine how you’ll deploy the model, how it will be served, and how you’ll monitor it. (8 minutes)

- Step 6: Wrap up. Summarize your solution and present additional considerations you would address with more time. (5 minutes)

Step 1: Define the Problem

Begin your ML system design by defining the problem, setting interview parameters, and aligning with the interviewer.

This step gauges your ability to scope problems and pinpoint system requirements. Start by identifying the primary ML problem, then ask about the system's requirements.

State your learning objectives and the function(s) to achieve them.

Specify the model and datasets needed for your system. Here are common tasks with their typical models and datasets:

- Recommendation: Ranks samples by similarity. Uses a collaborative or user-based filtering model and a large dataset with (user, item, rating) rows.

- Regression: Predicts a continuous scalar value. Uses a regularized linear regression and a dataset mapping features to a scalar value.

- Classification: Categorizes input into various categories. Uses logistic regression and a dataset mapping features to a category.

- Generation: Outputs new samples conditioned on an input. Uses a neural network and a dataset associating input and output space samples.

- Ranking: Predicts an ordering of elements. Uses a regression model that predicts a ranking score and a dataset mapping from (element, element set) to the goodness of the element.
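As an illustration of the Recommendation entry above, the sketch below fits a tiny matrix factorization model to (user, item, rating) rows with stochastic gradient descent. It is one common way to do collaborative filtering, not the only one. The toy ratings, the hyperparameters, and the function names `factorize` and `predict` are all assumptions chosen for this example.

```python
import random

def factorize(ratings, n_users, n_items, k=2, lr=0.02, reg=0.01, epochs=300, seed=0):
    """Learn k-dimensional user and item factors by SGD on squared error."""
    rng = random.Random(seed)
    P = [[rng.uniform(-0.1, 0.1) for _ in range(k)] for _ in range(n_users)]
    Q = [[rng.uniform(-0.1, 0.1) for _ in range(k)] for _ in range(n_items)]
    for _ in range(epochs):
        for u, i, r in ratings:
            err = r - sum(pu * qi for pu, qi in zip(P[u], Q[i]))
            for f in range(k):
                pu, qi = P[u][f], Q[i][f]
                # Gradient step on squared error with L2 regularization.
                P[u][f] += lr * (err * qi - reg * pu)
                Q[i][f] += lr * (err * pu - reg * qi)
    return P, Q

def predict(P, Q, u, i):
    """Predicted rating is the dot product of user and item factors."""
    return sum(pu * qi for pu, qi in zip(P[u], Q[i]))

# Hypothetical (user, item, rating) rows for 3 users and 3 items.
ratings = [(0, 0, 5.0), (0, 1, 3.0), (1, 0, 4.0),
           (1, 2, 1.0), (2, 1, 2.0), (2, 2, 5.0)]
P, Q = factorize(ratings, n_users=3, n_items=3)
unseen_score = predict(P, Q, 0, 2)  # score for a pair with no observed rating
```

To recommend, you would score every unseen item for a user with `predict` and sort by score, which is also where the retrieval-then-ranking split comes in at larger scale.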

Step 2: Define Requirements and Tradeoffs

Next, establish the system's goals. This involves identifying the key requirements and tradeoffs of the system.

Consider the following:

- Accuracy and performance: Define the system's minimum accuracy and efficiency. Consider if accuracy can be compromised for performance during traffic peaks.

- Traffic/bandwidth: Estimate the number of simultaneous users and average traffic. Assess traffic distribution and expected daily active users (DAUs).

- Data sources and requirements: Identify available data sources and potential issues like noise or missing values, toxic content, and data privacy or copyright restrictions.

- Computational resources and constraints: Determine available computational resources for model training and serving and the possibility of workload parallelization.
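The traffic and compute questions above usually come down to quick back-of-the-envelope arithmetic. The sketch below shows one way to turn DAUs into queries per second (QPS) and a rough replica count. Every number here (the DAU figure, requests per user, the peak multiplier, and per-replica throughput) is a made-up assumption for illustration; in an interview you would ask the interviewer for these values.

```python
import math

SECONDS_PER_DAY = 24 * 60 * 60

def estimate_qps(daily_active_users, requests_per_user_per_day, peak_factor=3.0):
    """Return (average QPS, peak QPS) from daily usage assumptions."""
    average = daily_active_users * requests_per_user_per_day / SECONDS_PER_DAY
    return average, average * peak_factor

def replicas_needed(peak_qps, qps_per_replica):
    """Number of model servers needed to absorb peak traffic."""
    return math.ceil(peak_qps / qps_per_replica)

# Hypothetical inputs: 10M DAUs, 20 requests each, peak at 3x the average,
# and a model server that sustains 500 QPS.
avg_qps, peak_qps = estimate_qps(10_000_000, 20)
servers = replicas_needed(peak_qps, qps_per_replica=500)
print(f"average ~{avg_qps:,.0f} QPS, peak ~{peak_qps:,.0f} QPS, {servers} replicas")
```

Stating the assumptions out loud matters more than the exact result, since they determine whether a smaller, faster model is needed during traffic peaks.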

Key Concepts

Review these essential ML concepts before diving into your interview practice.

- Implement an attention mechanism using PyTorch. Concepts: neural network architectures, tensor operations, PyTorch/TF knowledge.

- Implement a convolutional filter using PyTorch/TensorFlow. Concepts: neural network architectures, tensor operations, PyTorch/TF knowledge.

- Explain what a tokenizer is, why tokenizers are needed, and the common types of tokenizers. Concepts: tokenizers, pre-processing, internationalization.

- What is a BERT model? Concepts: neural network architectures, masked language modeling, encoders.

- What are some differences between BERT-style and GPT-style models? Concepts: encoder versus decoder models, causal versus bidirectional training objectives, auto-regressive decoding.

- What is in-context learning? Concepts: gradient-free learning, few-shot inference, prompting.

- Explain the bias-variance tradeoff. Concepts: bias-variance decomposition, regularization.

- Explain how you would evaluate an LM before deploying it to a product. Concepts: evaluation metrics, precision vs. recall, ID vs. OOD evaluation, dataset splits, fairness/bias.

- What are the differences between stochastic gradient descent, mini-batch gradient descent, and gradient descent? If/when is each guaranteed to converge? Concepts: gradient descent, batching, optimization.

- What are some types of adaptive optimizers? Concepts: optimization, Adam, Adagrad, Adadelta, AdaBound, etc.

- Describe some metrics you would track while pre-training a large LM, and explain what each metric tells you. Concepts: evaluation metrics, underfitting vs. overfitting.

- Design a machine-learning system for classifying emails as spam or ham. Concepts: classification models, evaluating classification models.

- What distinguishes a Transformer from a recurrent neural network (RNN)? Concepts: attention, recurrence, encoder/decoder models.

- Describe how you would evaluate a trained model's out-of-distribution (OOD) generalization. Concepts: generalization, evaluation.
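For the attention question in the list above, it helps to know the computation well enough to write it without a framework. The following is a minimal plain-Python sketch of scaled dot-product attention, softmax(QK^T / sqrt(d_k))V, with an optional causal mask. It deliberately avoids PyTorch so that every step is explicit; in an interview you would express the same steps with tensor operations such as `torch.matmul` and `torch.softmax`. The function names and list-of-rows layout are choices made for this example.

```python
import math

def softmax(row):
    """Numerically stable softmax over one row of scores."""
    m = max(row)
    exps = [math.exp(x - m) for x in row]
    total = sum(exps)
    return [e / total for e in exps]

def attention(Q, K, V, causal=False):
    """Scaled dot-product attention over lists of row vectors.

    Q is (n_queries x d_k), K is (n_keys x d_k), V is (n_keys x d_v).
    Returns (outputs, attention weights).
    """
    d_k = len(K[0])
    outputs, all_weights = [], []
    for qi, q in enumerate(Q):
        scores = [sum(a * b for a, b in zip(q, k)) / math.sqrt(d_k) for k in K]
        if causal:
            # Mask future positions so query i only attends to keys 0..i.
            scores = [s if kj <= qi else float("-inf")
                      for kj, s in enumerate(scores)]
        weights = softmax(scores)
        out = [sum(w * v[c] for w, v in zip(weights, V))
               for c in range(len(V[0]))]
        outputs.append(out)
        all_weights.append(weights)
    return outputs, all_weights
```

The `causal` flag also illustrates the BERT-style versus GPT-style question: bidirectional encoders attend to every position, while auto-regressive decoders mask out future tokens.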

Preparing for Machine Learning Interviews

Machine learning interviews tend to be area-specific, meaning that ML engineers/research scientists are often hired for specific teams rather than as generalists.

To prepare for such interviews, research the team you are interviewing for. Look at their recent products/features and published research papers/blog posts. Skim through a few top papers in the current ML literature in their sub-field to have a good idea of the current state-of-the-art.

To prepare for coding interviews, brush up on fundamentals in your ML framework of choice. Most ML start-ups and large companies outside of Google use Python and PyTorch nowadays, so you should aim to be proficient in that.

PyTorch has excellent tutorials that cover data loading, training loops, neural network architecture implementations, and reinforcement learning. Many companies use a wrapper on top of PyTorch, such as Hugging Face Transformers. Hugging Face offers useful online courses covering the essentials of its transformers, datasets, and metrics libraries.

Looking at their examples can be helpful, too, so you know how to implement real-life ML applications with their frameworks.

For ML systems design interviews, look at multiple examples of different ML problems. Online courses such as Stanford's CS 329S and Chip Huyen's Machine Learning Systems Design cover essential topics for ML system design, including data collection/pre-processing, training/inference infrastructure, monitoring, and evaluation.

Once you have covered the fundamentals, read the recent papers that top industry labs have released for their applied ML systems, such as the YouTube ranking system or the TikTok recommendation algorithm.

It is a lot of information to cover, so focus on getting a high-level intuitive understanding before focusing on implementation details. Understand why certain design decisions were made and how to generalize the same problem-solving techniques to other problems.

For example, how would you generalize a 2-class SVM to a multi-class SVM? Or, how might you apply a collaborative recommendation system to a book recommendation website instead of Spotify, which is a more classic setting?
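One possible answer to the SVM question above is the one-vs-rest strategy: train one binary SVM per class and predict the class whose classifier gives the highest score. The sketch below is a minimal illustration of that idea in plain Python, using a linear SVM trained by subgradient descent on the regularized hinge loss. The function names, hyperparameters, and toy data are assumptions for this example, and other generalizations (such as one-vs-one or a joint multi-class hinge loss) are equally valid answers.

```python
def train_binary_svm(X, y, lr=0.01, reg=0.001, epochs=200):
    """Linear SVM via subgradient descent on hinge loss; labels are -1 or +1."""
    w = [0.0] * len(X[0])
    b = 0.0
    for _ in range(epochs):
        for x, t in zip(X, y):
            margin = t * (sum(wi * xi for wi, xi in zip(w, x)) + b)
            # Shrink weights (L2 regularizer), then apply the hinge
            # subgradient only when the margin constraint is violated.
            w = [wi - lr * reg * wi for wi in w]
            if margin < 1:
                w = [wi + lr * t * xi for wi, xi in zip(w, x)]
                b += lr * t
    return w, b

def train_one_vs_rest(X, labels):
    """Train one 'this class versus everything else' SVM per class."""
    models = {}
    for c in sorted(set(labels)):
        y = [1 if label == c else -1 for label in labels]
        models[c] = train_binary_svm(X, y)
    return models

def predict(models, x):
    """Pick the class whose binary SVM assigns the highest score."""
    def score(c):
        w, b = models[c]
        return sum(wi * xi for wi, xi in zip(w, x)) + b
    return max(models, key=score)

# Hypothetical 2-D points forming three well-separated clusters.
X = [[0, 4], [0, 5], [1, 5], [-5, -4], [-4, -5], [-5, -5],
     [5, -4], [4, -5], [5, -5]]
labels = ["a", "a", "a", "b", "b", "b", "c", "c", "c"]
models = train_one_vs_rest(X, labels)
```

The same habit of reducing a new problem to one you already know how to solve applies to the recommendation example as well.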
