This is a breakdown of machine learning system design interviews.

During these interviews, you will design a system from start to finish, including:

- preprocessing the data,

- training and evaluating the model,

- and deploying the model.

These questions assess your ability to consider real-world aspects of productionizing an ML model, such as:

- efficiency,

- monitoring,

- preventing harmful model outputs,

- and building inference infrastructure.

They also test your ability to model a business problem as an ML problem.

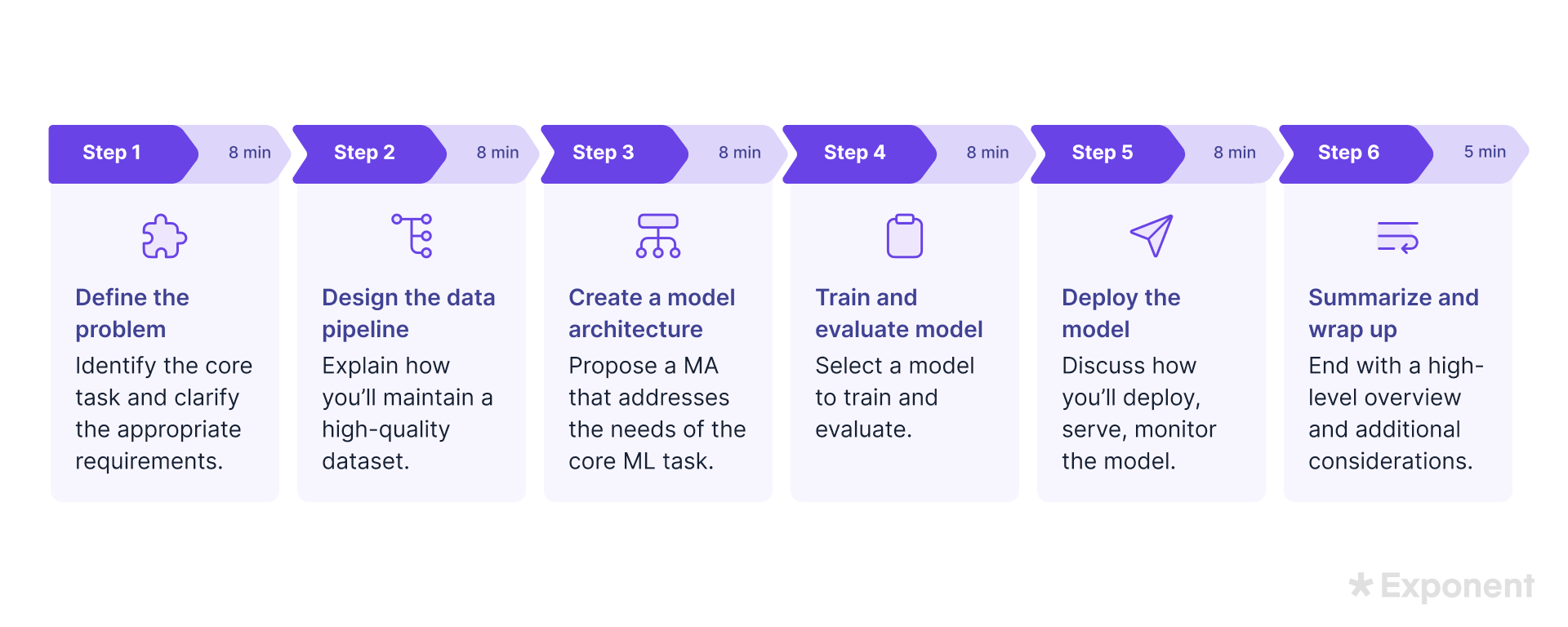

ML System Design Framework

ML system design interview questions are challenging because they require you to synthesize many ML concepts into a working solution.

You have the added pressure of working within a limited time frame.

A framework helps you stay focused, budget your time strategically, and communicate with the interviewer.

- Step 1: Define the problem. Identify the core ML task and ask clarifying questions to determine the appropriate requirements and tradeoffs. (8 minutes)

- Step 2: Design the data processing pipeline. Illustrate how you’ll collect and process your data to maintain a high-quality dataset. (8 minutes)

- Step 3: Create a model architecture. Come up with a suitable model architecture that would address the needs of the core ML task identified in Step 1. (8 minutes)

- Step 4: Train and evaluate the model. Select a model and explain how you’ll train and evaluate it. (8 minutes)

- Step 5: Deploy the model. Determine how you’ll deploy the model, how it will be served, and how to monitor it. (8 minutes)

- Step 6: Wrap up. Summarize your solution and present additional considerations you would address with more time. (5 minutes)

Step 1: Define the Problem

Begin your ML system design by defining the problem, setting interview parameters, and aligning with the interviewer.

This step gauges your ability to scope problems and identify system requirements.

Specify the model and datasets needed for your system:

- Recommendation: Rank samples by similarity. Use a collaborative or content-based filtering model and a large dataset with (user, item, rating) rows.

- Regression: Predict a continuous scalar value using regularized linear regression and a dataset mapping features to a scalar value.

- Classification: Categorize input into various categories. Use logistic regression and a dataset mapping features to a category.

- Generation: Output new samples conditioned on an input. Use a neural network and a dataset associating input and output space samples.

- Ranking: Predict the order of elements. Use a regression model for ranking score and a dataset mapping from an element or element set to the element's goodness.

💬 In the Spotify example, you could say:

"We are trying to build an ML-based recommender system on Spotify that recommends artists to users based on their liked playlists, songs, and artists.

The success of this system will depend on user engagement, which we'll measure by the number of clicks. If a user clicks on a recommendation, that's a point towards the algorithm. If they don't, then we can agree it was a bad recommendation.

We can go deeper and assess the amount of time they engaged with the recommendation, but to keep things simple for now, let's go with just a click."

Establish the system's goals.

Identify requirements and potential tradeoffs. Consider:

- Accuracy and performance: Define the system's minimum accuracy and efficiency. Can accuracy be compromised for performance during traffic peaks?

- Traffic/bandwidth: Estimate the number of simultaneous users and average traffic. Assess traffic distribution and expected Daily Active Users (DAUs).

- Data sources and requirements: Identify available data sources and potential issues, such as noise or missing values, toxic content, and data privacy or copyright restrictions.

- Computational resources and constraints: Determine available computational resources for model training, serving, and the possibility of workload parallelization.
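A back-of-envelope calculation can make the traffic question concrete. The sketch below converts a daily active user count into average and peak requests per second. Every number in it (the DAU count, requests per user, and the peak factor) is a hypothetical assumption for illustration, not a figure from a real system.

```python
SECONDS_PER_DAY = 24 * 60 * 60


def estimate_qps(dau: int, requests_per_user_per_day: float, peak_factor: float = 3.0) -> tuple[float, float]:
    """Return (average QPS, peak QPS) for the given traffic assumptions."""
    avg_qps = dau * requests_per_user_per_day / SECONDS_PER_DAY
    return avg_qps, avg_qps * peak_factor


# Hypothetical inputs: 10M DAU, 20 recommendation requests per user per day,
# and peak traffic assumed to be 3x the daily average.
avg_qps, peak_qps = estimate_qps(dau=10_000_000, requests_per_user_per_day=20)
print(f"average ~{avg_qps:,.0f} QPS, peak ~{peak_qps:,.0f} QPS")
```

Stating the assumptions out loud matters more than the exact result, since the interviewer can then correct any input that is off.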

💬 In the Spotify example, you could say:

"I have two clarifying questions:

- First, what kind of raw data do we already have access to? Do we need to collect new data?

- What is the condition of the raw data?

We’ll assume that click data from users will be one data source. The other source will be user metadata, such as age or location.

Understanding the condition of the raw data helps us plan for what pipelines and transformations are needed to convert it into a usable format.

Let’s assume we get click data in a JSON serialized format.

These are usually events that come in and land in an object store. The user metadata is simpler, as it's available directly within the Postgres account table. However, we must remember that it is PII data, so it must be used carefully."

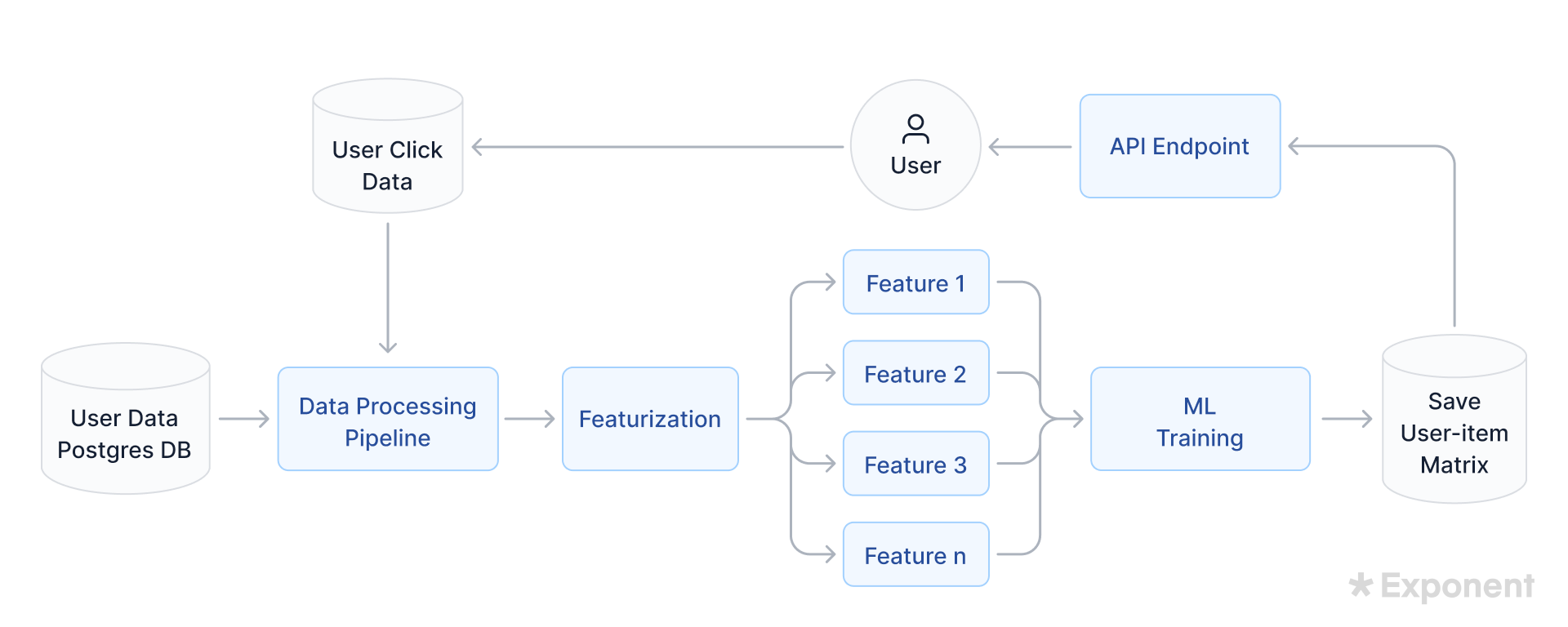

Step 2: Data Processing Pipeline

Designing a data pipeline shows your interviewer that you understand the importance of high-quality data, not just high-quality algorithms.

Show your interviewer that you’re thinking about data quality:

- What kind of data is needed? Numbers, text, images, multimodal, etc.

- How will you collect the data? Programmatic labeling, synthetic data augmentation, human annotation, etc.

- Do you need to do any kind of feature engineering? For example, would it be helpful to pre-compute some features, such as categorizing people’s ages into bins of “adolescent,” “adult,” etc.?

- What kind of data pre-processing do you need to do? Tokenization, normalization, encoding categorical features in numerical form, removing low-quality data, imputing missing values, synthetically augmenting data, etc.

- Are there privacy concerns related to the kind of data you’re using? Can you remove identifying information or apply filtering or pre-processing techniques that induce k-anonymity (for sufficiently large k)?

- How do you ensure that no data contamination is occurring? For example, if your data segments are generated by the same process (the same spammer creates multiple spam emails in the same spam classification dataset), then ensure that those segments are in the same split of your data.
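The contamination point above can be sketched as a group-aware split: every record from the same source lands in the same split. This is a minimal illustration using a hash of the group ID. The `sender_id` field, the sample emails, and the 20% test fraction are assumptions made up for the example.

```python
import hashlib


def assign_split(group_id: str, test_fraction: float = 0.2) -> str:
    """Deterministically map a group (e.g., a sender) to 'train' or 'test'."""
    digest = hashlib.sha256(group_id.encode("utf-8")).hexdigest()
    bucket = int(digest, 16) % 10_000 / 10_000  # pseudo-uniform value in [0, 1)
    return "test" if bucket < test_fraction else "train"


# Hypothetical spam dataset: (sender_id, email_text) pairs.
emails = [
    ("spammer_a", "Win a prize now"),
    ("spammer_a", "Claim your prize today"),
    ("user_b", "Lunch tomorrow?"),
    ("spammer_c", "Cheap watches"),
    ("user_b", "Meeting notes attached"),
]

splits = {"train": [], "test": []}
for sender_id, text in emails:
    # The split depends only on the sender, so a sender never straddles splits.
    splits[assign_split(sender_id)].append((sender_id, text))

train_senders = {sender for sender, _ in splits["train"]}
test_senders = {sender for sender, _ in splits["test"]}
print(train_senders & test_senders)  # empty set: no sender appears in both splits
```

Because the assignment is a pure function of the group ID, the split stays stable as new data arrives, at the cost of split sizes that only approximate the target fraction.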

💬 In the Spotify example, you could say:

"Having clarified the data conditions and sources in the previous step, we’re ready to design a data processing pipeline. We’ll use the two data sources identified above to create data processing pipelines and fetch what we need to make our features.

Then, we’ll access the raw click data and the Postgres table for the account information. Afterwards, we’ll create our features.

We must decide between a batch-based or real-time solution to collect and process the data. A batch-based system is usually easier to manage, whereas real-time training and inference are compute-intensive and expensive.

It’s usually better to run at least one of them in batch, preferably training (as this takes the most time). However, we can run inference in real time if needed.

Ideally, both training and inference would run in batches. Serverless jobs would then pull the latest recommendations, which the batch job stores in a cache. This way, the recommendations are always available but refreshed every few hours.

For this scenario, we’ll use a batch-based system for both training and inference.

Since click data is coming in as JSON events and landing in an object store, we’ll design the data pipeline as an ETL pipeline.

We’ll create an abstracted data model to illustrate how we want our data to look before feeding it into the model. Generally, we want our features to be as independent of one another as possible because this prevents complicated correlations between features from occurring.

We’ll take the following feature engineering steps:

- Read the data in its raw format

- Deserialize it

- Finalize the first 4 features:

  - Age group

  - Location (City, State, United States)

  - An array of the 100 most recent favorite artists. Each element in the array is a map object with artist info (artist name, active # days, trending rank, genre, number of followers, etc.)

  - An array of the last 100 listened-to songs (song name, artist name, active # days, trending rank, genre, number of followers, number of likes, average listen time for song, the standard deviation of listen time for song, etc.) To keep the listen-time metric simple, we can categorize it as full, partial, or skipped.

- Fetch the fields from the deserialized JSON records

- Clean them in preparation for feature engineering:

  - Mask PII (date of birth, full name, emails, etc.)

  - Parse location (convert from coordinates to city, state)

  - Discard things we don’t need. For example, the only PII we need is the userID and user date of birth to categorize them into an age group

  - Normalize fields: convert everything to lowercase, remove spaces and punctuation, remove noise, deduplicate records, and format timestamps correctly.

- Fetch the artist and song details from the JSON array to create the arrays of songs/artists mentioned above.

- After cleaning the data, it will land in a Postgres database. The data will contain information on the click event that happened. This information usually comes in the form of card elements on the UI, which contain the song's title, artist, artist ranking, genre of music, song duration, how long the song was played for, volume levels throughout the song, and more.

We’ll store all these features in a new table and then write them to a feature store for model consumption."
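The deserialize, clean, and featurize steps in this example can be sketched for a single click event. This is a simplified illustration, not a production ETL job: the field names (`user_id`, `artist`, `clicked`, `date_of_birth`, `city`, `state`), the age bin edges, and the "senior" bin are all assumptions, and the location is taken as already parsed rather than converted from coordinates.

```python
import json
from datetime import date


def age_group(date_of_birth: date, today: date) -> str:
    """Bucket an exact birth date into a coarse age group (bin edges are assumptions)."""
    # Subtract one year if this year's birthday has not happened yet.
    age = today.year - date_of_birth.year - (
        (today.month, today.day) < (date_of_birth.month, date_of_birth.day)
    )
    if age < 18:
        return "adolescent"
    if age < 65:
        return "adult"
    return "senior"


def build_features(raw_event: str, account: dict, today: date) -> dict:
    """Turn one raw JSON click event plus its account row into a feature record."""
    event = json.loads(raw_event)  # deserialize the raw event
    return {
        "user_id": event["user_id"],
        # Keep only the derived age group; the raw date of birth and name are dropped.
        "age_group": age_group(date.fromisoformat(account["date_of_birth"]), today),
        "city": account["city"].strip().lower(),
        "state": account["state"].strip().lower(),
        "artist": event["artist"].strip().lower(),
        "clicked": bool(event["clicked"]),
    }


# Hypothetical inputs standing in for the object store and the account table.
raw = '{"user_id": "u1", "artist": " Daft Punk ", "clicked": 1}'
account_row = {"date_of_birth": "1990-06-15", "full_name": "Jane Doe", "city": "Austin", "state": "TX"}
features = build_features(raw, account_row, today=date(2024, 1, 1))
print(features)
```

Only the fields listed in the returned dictionary reach the feature store, which is one simple way to keep PII such as the full name out of downstream tables.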

Step 3: Model Architecture

Once you've got your data, select and train a suitable ML model. In this step, you need to justify your model choice considering:

- Type of learning problem: Which models fit the core ML task in your interview problem?

- Use case: Will this model be used for predictions by another system or interacted with directly by users? Does it require frequent re-training or personalization?

- Simplicity: What's the simplest model that provides enough accuracy?

- Practical constraints: Consider any safety, privacy, storage, and business constraints.

💬 In the Spotify example, you could say:

"Now that we’ve created a data pipeline, we’ll consider the types of models typically used for recommendation systems.

Traditionally, recommendation systems take advantage of data from other users and use that to recommend something to new or even existing users. This is known as collaborative filtering, and it becomes a challenge when there is little data from other users (the cold-start problem).

Additionally, recommendation systems increasingly involve deep learning alongside traditional supervised techniques like decision trees and gradient-boosted trees (e.g., XGBoost).

There’s a massive library of paths to choose from."

Select a model architecture.

Identify suitable model architectures that meet the system requirements, like latency or memory optimization.

Potential architectures for a classification task include logistic regression, a complex neural network, or a search-optimized two-tower architecture.

For example, you might choose a simpler neural network to reduce training cost and inference latency, even if it costs some accuracy.

💬 In the Spotify example, you could say:

"To satisfy our current use case, let’s start with a simple architecture. Assuming we have the required data, we’ll move forward with the collaborative filtering element. With music, trends are traditionally developed through mutual sharing between listeners.

The simplest model we can select will create a feature vector for each user. Each feature vector is keyed by a unique user ID and is composed of that user’s features (age group, location, array of favorite artist maps, array of favorite song maps).

We’ll score each of these vectors between -1 and 1. This scoring method consolidates the vectors into a single number representing users and their preferences. We’ll also score each item we recommend between -1 and 1, depending on its popularity and number of plays. This allows us to compare users on the same scale (normalization).

We’ll then organize these scores for each user into a user-item matrix. Each user is on a row, and each item is on a column. We’ll then compute the product of each feature vector’s score with the recommended song’s score and set a threshold between -1 and 1.

Depending on how close the product is to 1, we’ll decide whether to recommend that item to the user. We can set the threshold high if we want to give a small number of highly targeted recommendations, and low if we want to give broader ones.

Generally, starting with a low threshold is better to collect as much information as possible. Then, we can begin to pinpoint the optimal threshold value for future recommendations."
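The scoring scheme in this example can be sketched in a few lines. It assumes the user and item scores in [-1, 1] have already been computed somehow. The names and values below are hypothetical, and this thresholded product is a simplification of the idea described above rather than a full collaborative filtering implementation.

```python
def recommend(user_scores: dict, item_scores: dict, threshold: float) -> dict:
    """Recommend every item whose (user score x item score) product meets the threshold."""
    recommendations = {}
    for user, u_score in user_scores.items():
        # One row of the user-item matrix: the product for every item.
        row = {item: u_score * i_score for item, i_score in item_scores.items()}
        recommendations[user] = sorted(
            (item for item, product in row.items() if product >= threshold),
            key=lambda item: row[item],
            reverse=True,  # strongest match first
        )
    return recommendations


# Hypothetical scores in [-1, 1].
user_scores = {"alice": 0.9, "bob": -0.5}
item_scores = {"artist_x": 0.8, "artist_y": 0.2, "artist_z": -0.9}

strict = recommend(user_scores, item_scores, threshold=0.4)  # few, targeted picks
loose = recommend(user_scores, item_scores, threshold=0.1)   # broader picks
print(strict)
print(loose)
```

Lowering the threshold from 0.4 to 0.1 adds `artist_y` to Alice's list, which is the tradeoff between limited and broad recommendations described in the example.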

Step 4: Training and Evaluating

Select a model and decide on an optimization algorithm, metrics for monitoring, and a hyperparameter tuning strategy.

Your training plan might change depending on your hardware availability, whether you run parallel training jobs, and how data and model parameters are distributed across multiple devices.

Some tasks allow fine-tuning a pre-trained model instead of training from scratch.

💬 In the Spotify example, you could say:

"To create training inputs, we’ll take the processed data, encode non-numerical data, and featurize the rest. The training will produce a user-item matrix. This matrix will then be used to make a probabilistic prediction of whether to recommend an item to the user.

The user is then presented with these recommendations. The click data is collected as positive feedback if a user clicks on any recommendation. Any items that have been recommended that were not clicked will be considered negative feedback. The number of clicks over the total number of recommendations is considered the accuracy metric for the model."
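The metric described in this example (clicks divided by recommendations shown) is usually called click-through rate. A minimal sketch, assuming feedback is logged as hypothetical `(item_id, was_clicked)` pairs:

```python
def click_through_rate(feedback: list[tuple[str, bool]]) -> float:
    """feedback holds one (item_id, was_clicked) pair per recommendation shown."""
    if not feedback:
        return 0.0  # nothing shown yet, so report 0 instead of dividing by zero
    clicks = sum(1 for _, clicked in feedback if clicked)
    return clicks / len(feedback)


# Hypothetical log: four recommendations shown, two clicked.
shown = [("artist_x", True), ("artist_y", False), ("artist_z", False), ("artist_w", True)]
ctr = click_through_rate(shown)
print(ctr)  # 0.5
```

One caveat worth raising with the interviewer: an unclicked item is only a noisy negative, since the user may never have seen it.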

Evaluate the model.

Present your evaluation plan to your interviewer, considering where your model will be used and how an incorrect prediction could impact users.

Evaluation standards include:

- Accuracy: F1, precision, recall, confusion matrices, etc.

- Bias: Group fairness, etc.

- Calibration: Checking that the model’s predicted probabilities match how often those predictions are actually correct.

- Sensitivity/Robustness: Evaluating how minor changes affect a model’s prediction.

- Comparisons against Baselines: Comparing with the simplest model, a random or human baseline.

Discuss the pros and cons of your chosen evaluation metrics, such as how precision@k compares to NDCG@k in a ranking task.
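To make the comparison concrete, here is a small sketch of both metrics. It uses the linear-gain form of DCG (some definitions use 2^rel - 1 instead), and the two rankings are hypothetical.

```python
import math


def precision_at_k(relevance: list[int], k: int) -> float:
    """Fraction of the top-k results that are relevant (binary relevance, 0 or 1)."""
    return sum(1 for rel in relevance[:k] if rel > 0) / k


def dcg_at_k(relevance: list[float], k: int) -> float:
    """Discounted cumulative gain: each gain is divided by log2(rank + 1)."""
    return sum(rel / math.log2(rank + 1) for rank, rel in enumerate(relevance[:k], start=1))


def ndcg_at_k(relevance: list[float], k: int) -> float:
    """DCG normalized by the DCG of the ideal (descending) ordering."""
    ideal = dcg_at_k(sorted(relevance, reverse=True), k)
    return dcg_at_k(relevance, k) / ideal if ideal > 0 else 0.0


# Two hypothetical rankings with the same relevant items in different positions.
good_order = [1, 1, 0, 0]  # relevant items ranked first
bad_order = [0, 0, 1, 1]   # relevant items ranked last

print(precision_at_k(good_order, 4), precision_at_k(bad_order, 4))
print(ndcg_at_k(good_order, 4), ndcg_at_k(bad_order, 4))
```

Both rankings have the same precision@4, but NDCG@4 is lower for the second one because it penalizes relevant items that appear further down the list. Precision@k is easier to explain, while NDCG@k is position-aware and supports graded relevance.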

💬 In the Spotify example, you could say:

"Once we’ve established the accuracy metric, we’ll compare the features of the positive and negative recommendations. The differences will indicate whether certain features played a more significant role in affecting user behavior than others.

This data can then be used to create a feature weighting algorithm that learns to get better at weighing features.

Consequently, the collaborative filtering algorithm will also improve."

Step 5: Model Deployment

Understanding how components fit into the overall picture is crucial. Address these three key points:

- Deployment Timing: Choose appropriate evaluation metrics and testing strategies for your model on production data, like A/B tests, canary deployment, feature flags, or shadow deployment.

- Model Serving: Decide on the hardware (remote or on the edge), optimize and compile the model (NVCC, XLA), and plan for varying user traffic patterns.

- Monitoring: Post-production monitoring is vital for ML systems. Constantly improve performance and benchmark models. Decide on your ground truth dataset, indicators for model performance regression, and troubleshooting tools.
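One common indicator for the monitoring point above is the population stability index (PSI), which compares the distribution of a feature in production against a reference sample. The sketch below is a minimal version using equal-width bins. The bin count, the floor value for empty bins, and the sample data are assumptions.

```python
import math


def population_stability_index(expected: list[float], actual: list[float], bins: int = 10) -> float:
    """PSI between a reference sample and a production sample of one numeric feature."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if the reference is constant

    def bucket_fractions(values: list[float]) -> list[float]:
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1  # clamp out-of-range values
        # A small floor avoids log(0) for empty buckets.
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = bucket_fractions(expected), bucket_fractions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


# Hypothetical feature values: training-time reference vs. two production samples.
reference = [i / 100 for i in range(100)]
same = list(reference)
shifted = [0.5 + i / 200 for i in range(100)]

print(population_stability_index(reference, same))     # 0.0 for identical samples
print(population_stability_index(reference, shifted))  # large value: distribution moved
```

A frequently cited rule of thumb treats a PSI above roughly 0.2 as a significant shift, but the alert threshold should be tuned per feature.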

💬 In the Spotify example, you could say:

"The last step in this process is to understand when and how best to deploy our model into production.

First, we’ll use the engagement metric we defined earlier. Then, we can run an A/B test for this model to understand if it improves the user experience.

Second, we must understand the compute and storage resources needed to train, test, validate, and run inference. Let’s say we are using a cloud provider like AWS.

We can use Amazon SageMaker (to house, train, and test the model), AWS Lambda (to serve requested recommendations), and Amazon ElastiCache (to store the recommendations), then return the recommendations to the application via an API endpoint.

We can then auto-scale the resources to handle changing traffic volumes from the application."
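The A/B test in this example needs a way to decide whether a difference in click-through rate is real or noise. A two-proportion z-test is one standard option. The sketch below uses only the standard library, and the click counts are hypothetical.

```python
import math


def two_proportion_z_test(clicks_a: int, shown_a: int, clicks_b: int, shown_b: int) -> tuple[float, float]:
    """Return (z statistic, two-sided p-value) for the difference in click-through rate."""
    p_a, p_b = clicks_a / shown_a, clicks_b / shown_b
    pooled = (clicks_a + clicks_b) / (shown_a + shown_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / shown_a + 1 / shown_b))
    z = (p_b - p_a) / std_err
    # Two-sided p-value under the standard normal, written with erfc.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Hypothetical counts: control (A) vs. the new recommender (B).
z, p = two_proportion_z_test(clicks_a=1_000, shown_a=20_000, clicks_b=1_150, shown_b=20_000)
print(f"z = {z:.2f}, p = {p:.4f}")
```

A positive z with a small p-value suggests variant B's higher click-through rate is unlikely to be chance. In practice, the sample size and significance level should be fixed before the test starts.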

Step 6: Wrap Up

Review the problem scope, data processing pipeline, and how you would train, evaluate, and deploy the model in the last few minutes of the interview.

If there’s time, discuss some of your overall system design's main bottlenecks and tradeoffs.

- Why did you decide that those bottlenecks or tradeoffs would be acceptable?

- How would you scale the system for more data or inference/training requests?

- How would you adjust the model and/or data processing in the future to handle distribution shifts?

Ending with a high-level overview and additional considerations shows the interviewer you have a comprehensive understanding of the system and how to move your ML model into a production environment.

Once you’ve wrapped up, check in with your interviewer to see if they have follow-up questions.

💬 In the Spotify example, you could say:

"To recap, we’ve just designed a high-level system to recommend artists on Spotify.

We first identified our data sources as user metadata and click data. We then opted for a batch-based system to process the data, used a collaborative filtering model to score each user’s feature vectors, and collected click data to train the model.

We then discussed the factors affecting model deployment, such as engagement and compute and storage resources.

The other consideration to shed additional light on is post-production work. Machine learning is very dynamic since incoming data changes constantly. This affects the model and its performance, so monitoring and observing the model, data, and feature drift is important.

Observing the model's performance is essential to ensuring we meet our metric. We can check model performance continuously by tracking the metric we are testing against (engagement, measured by clicks)."

Common Interview Mistakes

These are common mistakes we see candidates make.

Rushing to a solution. Rather than jumping into the design, first analyze the specific problem you’re trying to solve by clarifying the system requirements, the situation's context, the data's scale, etc. Once you develop a baseline model, get the interviewer's input about what pieces to focus on.

Looking for the “right” answer. In most cases, there are no strictly right or wrong answers. Some are better justified than others, and your interviewer expects you to thoroughly justify your answers by explaining why you chose your design over possible alternatives.

Defaulting to state-of-the-art (SotA) models. It's important to check ML benchmark leaderboards to identify the current SotA models for a given task. However, remember that SotA models are often less efficient to train and run inference with (requiring more compute or data).

They're also usually evaluated only on academic benchmarks rather than in real-world settings. Practice building your own models and research other models to have a holistic understanding of the available options.

Overcomplicating the model. Many things can go wrong when training models, so start with a low-capacity, v1 solution. Once you have a v1 solution for the system that works on clean data, expand the model capacity to account for additional complexity (e.g., messy data and corner cases).

Starting with a basic model also budgets time for the interviewer to identify the pieces of the ML design they’d like you to focus on. Those hints show you can collaborate and incorporate feedback on your design.

Overlooking model evaluation and validation. Model selection is just one part of the problem, so budget time for the other steps.

Clarify how you’ll initially validate a model learned from some data (your strategy should involve quantitative and qualitative analysis), and discuss how continual validation will happen (e.g., using a metrics dashboard).

Top ML System Design Interview Questions

In your ML interviews, be prepared to answer a mix of behavioral, coding, conceptual, and system design questions.

Mock Interviews

- Watch: Design an Instagram ranking model. (with Meta MLE)

- Watch: Design a framework for evaluating ads. (with Meta MLE)

- Watch: Design a Spotify artist recommendation model. (with senior MLE)

- Watch: Train a model to detect bots. (with MIT Ph.D.)

- Watch: Design a landmark recognition system. (with Amazon MLE)

- Watch: Design an ETA system for a Maps application. (with research engineer)

Practice Yourself

- Practice: Build a fraud detection model for Stripe.

- Practice: Build a recommendation system for online courses.

Other Questions

Here are some real machine learning system design interview questions that other candidates have heard recently.

They include questions asked in FAANG and other top companies.

Recommendation

- Design a product recommendation system. (Meta, Pinterest)

- Design Netflix's "Top Picks" feature. (Meta)

- Design Instagram's Explore page. (Meta)

- Recommend similar artists on Spotify. (Spotify)

- Recommend restaurants on Google Maps.

Ranking

- Design an evaluation framework for ads ranking. (Meta)

- Design a personalized news ranking system. (Meta)

- Design a product ranking system for Amazon shopping.

- Design YouTube Search.

- Design type-ahead search for Amazon Prime.

Natural Language Processing (NLP)

- Detect the language of a text input. (Meta)

- Classify social media posts by topic.

- Design a customer support chatbot.

- Design a fake news detection system. (Meta)

- Design a podcast search engine.

Content Moderation and Filtering

- Design an automated comment moderation system. (Meta)

- Build a system to filter offensive comments.

- Design a spam detection system on Pinterest. (Pinterest)

- Filter duplicate schools on Facebook. (Meta)

- Design a fraud-detection system for Stripe.

Monitoring

- Design an ML monitoring system (drift, performance, outliers, and quality) for a fantasy sports app.

- Design a monitoring system for TikTok. (TikTok)

- Predict user behavior after product updates.

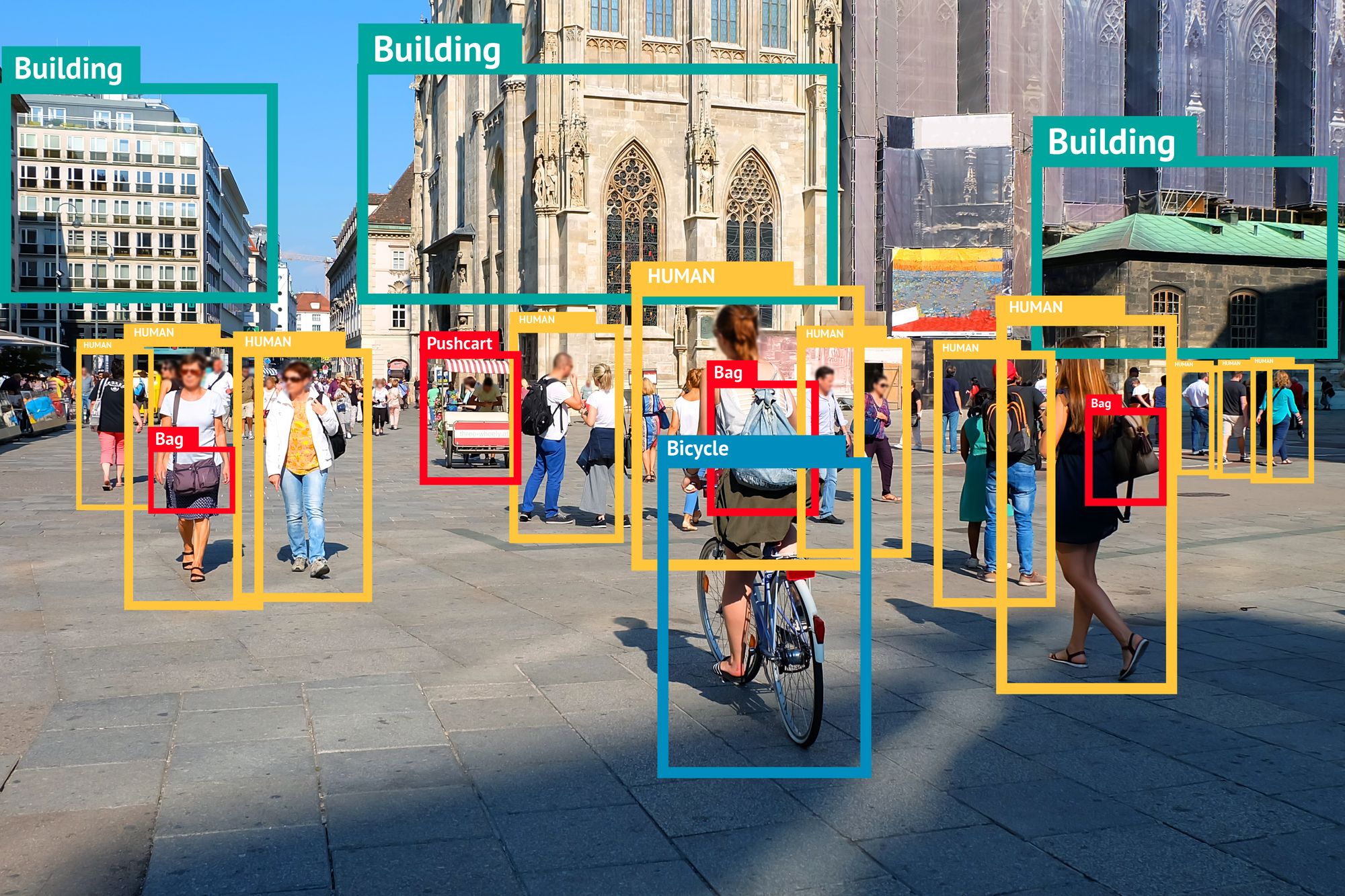

Computer Vision

- Design visual search for Pinterest.

- Design blurring for Google Street View.

- Design a shape-detection system.

- Design an automatic recycling bin.

Time Series and Sequential Data

- Design a system to communicate with a bank. (Capital One)

- Design a machine learning system that makes stock predictions from Reddit comments. (JP Morgan Chase)

Personalization

- Design TikTok's "For You" page. (TikTok)

- Design a "people you may know" system.

Ad Targeting

- Design YouTube advertising. (Meta)

- Design an evaluation framework for ads ranking. (Meta)

Interview Tips

It is impossible to cover all the possible questions since machine learning system design is a broad and varied topic!

However, hopefully, this guide has given you a sense of what to expect in your interviews.

- Explore the dozens of mock interviews and practice lessons in our machine learning engineering interview course.

- Schedule a free mock interview session to practice answering questions with peers.

- Get interview coaching from MLEs at top companies.

Good luck with your upcoming machine learning interview!
